March 2025 | Smart Agricultural Technology

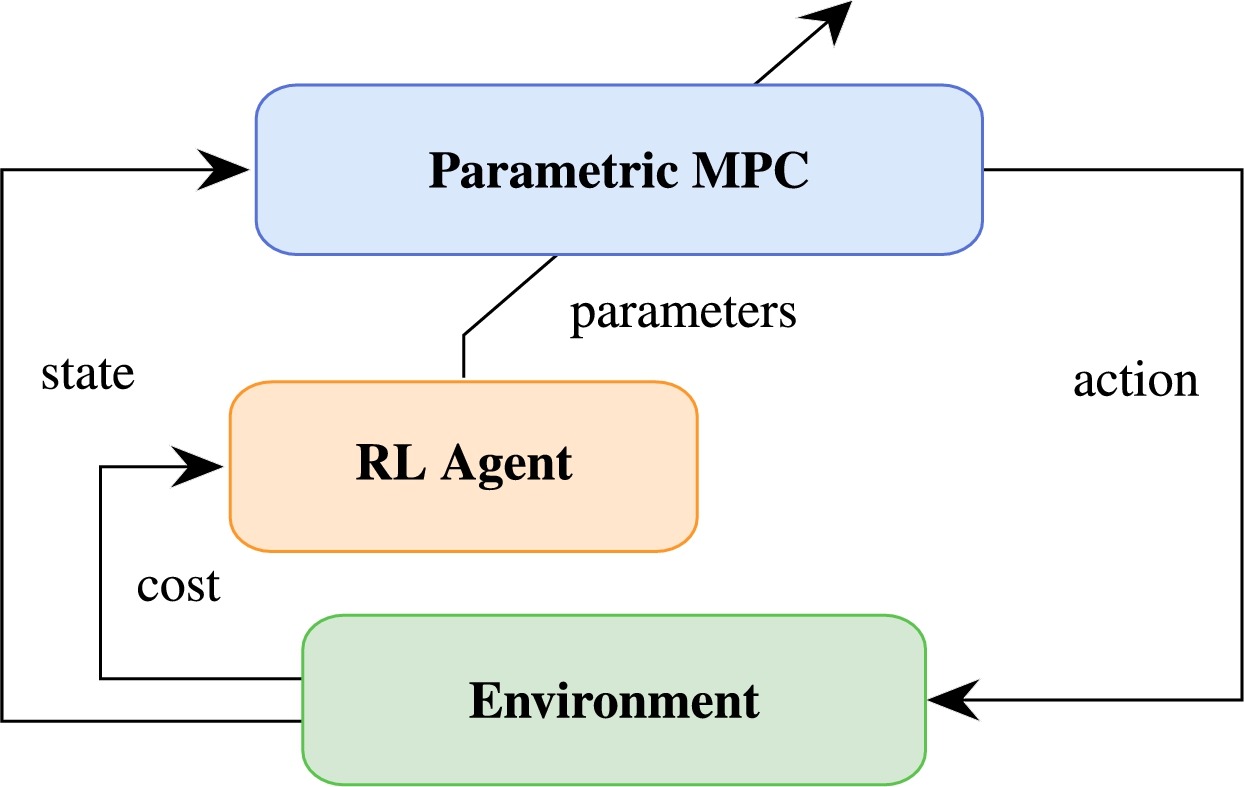

Introduction: Model predictive control (MPC) is a promising approach for greenhouse climate management, but its reliance on accurate prediction models leaves it vulnerable to uncertainty from modelling errors and inaccurate weather forecasts. Existing robust and stochastic MPC methods account for this uncertainty, but their conservatism often reduces crop yield and they increase the computational load. Researchers from Delft University of Technology and Wageningen University in the Netherlands proposed a framework that combines MPC with reinforcement learning (RL), in which a parametrized MPC scheme learns directly from data to optimize constraint satisfaction and climate control performance under prediction uncertainty.
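To make the idea of a parametrized predictive controller that learns from closed-loop data more concrete, here is a minimal toy sketch. It is not the authors' greenhouse model or their RL algorithm: the scalar dynamics, all constants, the function names, and the simple violation-driven update rule (standing in for a proper RL update) are assumptions chosen for illustration. A one-step-ahead controller pushes a state towards its upper bound `x_max` minus a learnable back-off `theta`, while an unmodelled disturbance causes violations that drive `theta` upward.

```python
import random

def one_step_mpc(x, theta, a=0.9, b=0.5, x_max=1.0, u_max=1.0):
    """Largest input whose *predicted* next state equals x_max - theta.

    The prediction model x_next = a*x + b*u ignores the disturbance,
    mimicking a controller with an imperfect model (hypothetical dynamics).
    """
    u = (x_max - theta - a * x) / b
    return min(max(u, 0.0), u_max)  # respect input bounds

def run_cycle(theta, rng, steps=200, a=0.9, b=0.5, x_max=1.0):
    """Simulate one 'growth cycle'; return (total violation, total input)."""
    x, violation, total_input = 0.0, 0.0, 0.0
    for _ in range(steps):
        u = one_step_mpc(x, theta, a, b, x_max)
        d = rng.uniform(0.0, 0.1)          # disturbance unknown to the controller
        x = a * x + b * u + d              # true system
        violation += max(x - x_max, 0.0)   # accumulated bound exceedance
        total_input += u
    return violation, total_input

def train(cycles=100, lr=0.005, decay=0.001, seed=0):
    """Tune theta from data: grow it when violations occur, shrink it
    slowly otherwise so the controller does not become overly conservative.
    This heuristic rule is an assumption, not the method from the paper."""
    rng = random.Random(seed)
    theta = 0.0
    for _ in range(cycles):
        violation, _ = run_cycle(theta, rng)
        theta = max(theta + lr * violation - decay, 0.0)
    return theta

if __name__ == "__main__":
    theta = train()
    nominal, _ = run_cycle(0.0, random.Random(1))
    learned, _ = run_cycle(theta, random.Random(1))
    print(f"learned back-off: {theta:.3f}")
    print(f"violation, nominal vs learned: {nominal:.2f} vs {learned:.2f}")
```

In this toy setting the learned back-off trades a small amount of input (a crude proxy for yield) for fewer bound violations, and the single learned parameter remains directly interpretable as a safety margin on the constraint.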

Key findings: Simulations using a lettuce greenhouse model showed that the proposed MPC-RL controller significantly reduced constraint violations compared with nominal MPC, robust MPC, and deep RL (DDPG) controllers. After 100 growth cycles of training, indoor CO₂ levels no longer violated their bounds, and humidity violations were reduced to brief, infrequent exceedances. The approach maintained a crop yield comparable to that of an ideal MPC controller, without the conservatism-induced yield losses seen in robust MPC methods with higher sample counts. Economic profit indicators also favored the MPC-RL approach over DDPG, which maximized yield but did so inefficiently relative to the control inputs it used. Computation times were similar to those of standard MPC approaches and substantially lower than those of robust MPC with multiple samples. The authors noted that, unlike black-box deep neural network controllers, the MPC-based RL approach allows the learned parameters to be interpreted, providing transparency for growers. Future work includes application to alternative greenhouse models and experimental validation in real-world settings.

Figure | Diagram of the MPC-based RL architecture.